- Use Google Cloud Generative AI Image Models in App...

Hi all,

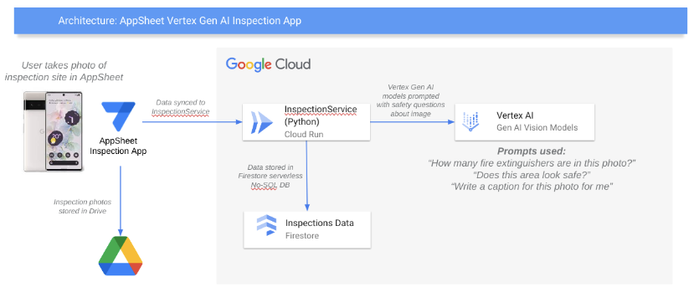

Here is a simple guide to using Google Cloud Vertex AI Visual Question Answering models in AppSheet. Until there is a direct integration, the simplest way I have found is to put a bit of serverless code behind an API in Google Cloud. The same pattern is also a good way to bring any advanced or new cloud features into AppSheet apps.

This demo was featured in the Google Cloud Next 2023 developer keynote (along with some amazing music at the beginning), so watch the session on YouTube for a walkthrough of the solution in action.

And yes, this involves some code in a no-code app, but the purpose of this guide is to show how simply it can be done, with automatic deployment to Cloud Run, and in a way that can be reused across many AppSheet apps.

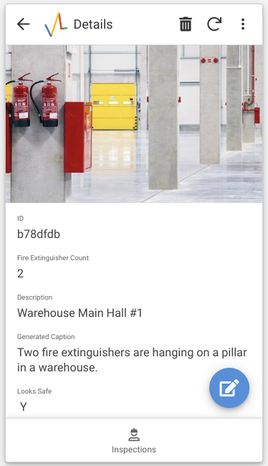

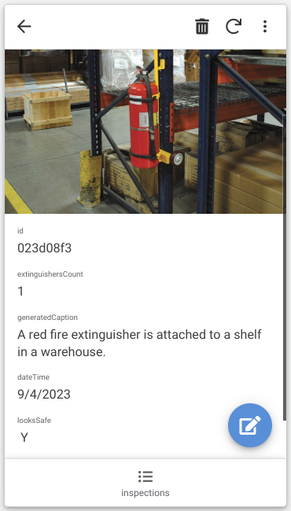

The use case demonstrated here is using Vertex AI image models to analyze images uploaded in an AppSheet app for safety and compliance, for example to check that fire extinguishers are present. In the end, though, you can use the Vertex AI models to ask any question about an image (counting people or objects, asking whether an image contains certain objects, etc.).

The fields above were generated with simple prompts like "How many fire extinguishers are in the photo?" and "Generate a caption for the photo," but you can use any prompts you like for any use-case.
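To make the prompt idea concrete, here is a minimal sketch of how one question about one image could be packaged for the Vertex AI prediction REST endpoint. The project ID, region, and the model name `imagetext@001` are assumptions for illustration, and the demo service in the repository may build its requests differently.

```python
import base64
import json

# Assumed values for illustration; replace with your own project and region.
PROJECT_ID = "my-project"
REGION = "us-central1"
# Assumption: the visual question answering model name on Vertex AI.
MODEL = "imagetext@001"


def build_vqa_request(image_bytes: bytes, prompt: str) -> tuple:
    """Return the predict URL and JSON body for one question about one image."""
    url = (
        f"https://{REGION}-aiplatform.googleapis.com/v1/projects/{PROJECT_ID}"
        f"/locations/{REGION}/publishers/google/models/{MODEL}:predict"
    )
    body = {
        "instances": [
            {
                "prompt": prompt,
                # The image travels inline as base64-encoded bytes.
                "image": {
                    "bytesBase64Encoded": base64.b64encode(image_bytes).decode("ascii")
                },
            }
        ],
        # Ask for a single answer per question.
        "parameters": {"sampleCount": 1},
    }
    return url, body


if __name__ == "__main__":
    url, body = build_vqa_request(
        b"fake image bytes", "How many fire extinguishers are in the photo?"
    )
    print(url)
    print(json.dumps(body, indent=2))
```

The body would be sent as an authenticated POST (for example with the access token of the service account that the Cloud Run service runs as); that part is left out here to keep the sketch self-contained.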

Here's an architecture diagram of how the solution works:

The project used is here on GitHub for anyone to try out: https://github.com/tyayers/appsheet-cloud-inspection-demo.

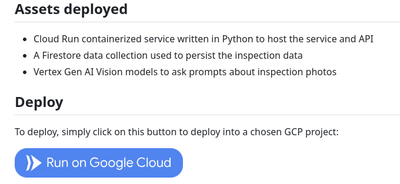

Prerequisites: You must have a Google Cloud project with the organizational policy "Domain Restricted Sharing" turned off so that AppSheet can access a public API in the project. This deployment uses the Google Cloud services Cloud Run, Firestore and Vertex AI. Some costs could accrue if you use the service a lot, but since the services are serverless with free tiers, costs for normal usage should be low to none.

Step 1: Open the GitHub repository above, and click on "Run on Google Cloud".

This will deploy the serverless function that offers the image model features from Google Cloud Vertex AI to our AppSheet app via an API. It is an all-in-one deployment - just one container deployed to Google Cloud Run and we're ready to go.

Confirm the deployment, and that you trust the repository. Be sure to test in a sandbox where no production or user data is located. If you want to use this in production, clone the repository and make sure it complies with your security practices before deployment.

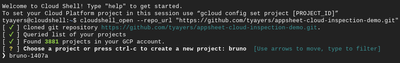

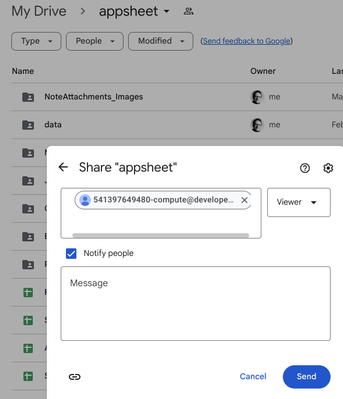

Step 2: Choose a Google Cloud project and region to deploy to.

I will deploy it to a project called bruno that I use for testing.

Choose any region that you would commonly use.

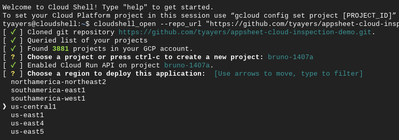

Now the service will be deployed to Cloud Run, and the default compute service account that the service runs as will be granted the roles it needs to save data to Firestore (a serverless database) and call the Vertex AI APIs.

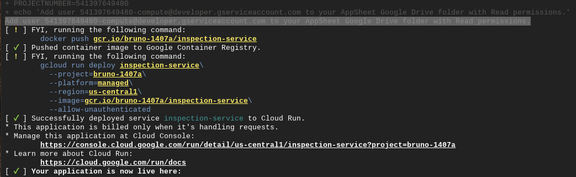

Step 3: Give the service account read access to the Google Drive folder where AppSheet data is stored

Because the service needs access to the images that users upload to the app in order to send them to Vertex AI, we need to manually give the service account access to our Google Drive folder. Look for the message in the deploy output that says "Add user 994329423-compute@developer.gserviceaccount.com to your AppSheet Google Drive folder with Read permissions."

Copy the email address, go to Google Drive, find the appsheet directory where data is stored, and share the folder with the email address with Viewer rights. Later you can instead share only the individual app's directory, so that the service account cannot read the data of your other apps.
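If you prefer to script the sharing step rather than use the Drive UI, the following is a rough sketch of the permission body for the Drive API v3 `permissions.create` call. The helper name `viewer_permission` and the `FOLDER_ID` placeholder are hypothetical; the role `reader` corresponds to Viewer rights in the Drive UI.

```python
def viewer_permission(service_account_email: str) -> dict:
    """Build a Drive API v3 permission body granting read-only (Viewer) access."""
    return {
        "type": "user",  # service accounts are shared with like regular users
        "role": "reader",  # shown as "Viewer" in the Drive UI
        "emailAddress": service_account_email,
    }


# Usage sketch (needs google-api-python-client and credentials; not run here):
#   from googleapiclient.discovery import build
#   drive = build("drive", "v3")
#   drive.permissions().create(
#       fileId=FOLDER_ID,  # hypothetical: ID of your appsheet Drive folder
#       body=viewer_permission("994329423-compute@developer.gserviceaccount.com"),
#       sendNotificationEmail=False,
#   ).execute()
```

Use the email address from your own deploy output; the one above is just the example quoted in this post.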

Step 4: Copy the service URL from the deployment text

Copy the URL shown under the text "[ ✓ ] Your application is now live here:" in the deployment window (it will be something like https://inspection-service-fdu8fuds-uc.a.run.app).

Step 5: Add service as data source in AppSheet

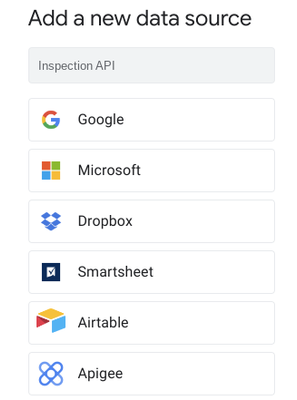

Now go to your AppSheet Account Data Sources page, and add a new Data Source with the name Inspection API and type Apigee.

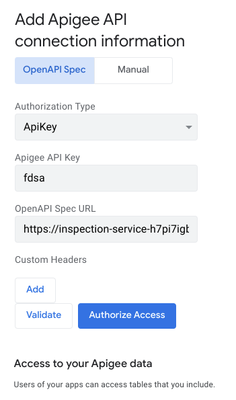

Enter anything in the Apigee API Key field (it's not checked in the demo source code), paste the service URL into the OpenAPI Spec URL field, then press Validate and Authorize Access.
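If Validate fails, it can help to check what the service URL actually returns. Here is a small sketch that downloads the document and checks for the top-level keys an OpenAPI (or older Swagger) spec would have. It assumes the spec is served as JSON at the service URL, which I have not stated above, so treat the helper names and that assumption as illustrative only.

```python
import json
from urllib.request import urlopen


def looks_like_openapi(doc: dict) -> bool:
    """Return True if the document has the top-level shape of an OpenAPI/Swagger spec."""
    has_version = "openapi" in doc or "swagger" in doc
    paths = doc.get("paths")
    return has_version and isinstance(paths, dict) and len(paths) > 0


def fetch_spec(service_url: str) -> dict:
    """Download and parse the document at the service URL (assumes a JSON response)."""
    with urlopen(service_url, timeout=10) as response:
        return json.load(response)


# Usage sketch with the example URL from Step 4 (replace with your own):
#   spec = fetch_spec("https://inspection-service-fdu8fuds-uc.a.run.app")
#   print(looks_like_openapi(spec), list(spec.get("paths", {})))
```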

Step 6: Create a new app based on Inspection API

Now create a new app based on existing data, and select the newly created Inspection API > inspections.

After the app has been created, you should have a new app with the test data. Go to the data configuration in the app for the inspections table, and mark the fields extinguishersCount and looksSafe as not required.

Then add a new record in the app preview window, and upload any picture that you have. After a few seconds you should get a generated caption for the photo, the number of fire extinguishers found in the photo, and whether the scene generally looks safe. These are just example prompts - you can change them or add different ones in the source code of the service (see the GitHub repo for details).
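To show the general idea of mapping prompts to record fields, here is a minimal sketch. It is not the code from the repository: the `ask` callable stands in for whatever sends the image and a prompt to Vertex AI, the `caption` field name and the wording of the safety prompt are assumptions, and the answer parsing is deliberately simple.

```python
import re
from typing import Callable, Optional

# One prompt per generated field. extinguishersCount and looksSafe are the
# field names used in the app; "caption" and the safety prompt are assumed.
PROMPTS = {
    "caption": "Generate a caption for the photo",
    "extinguishersCount": "How many fire extinguishers are in the photo?",
    "looksSafe": "Does the scene look safe?",
}


def parse_count(answer: str) -> Optional[int]:
    """Return the first integer in the answer, or None if it has no digits."""
    match = re.search(r"\d+", answer)
    return int(match.group()) if match else None


def parse_yes_no(answer: str) -> bool:
    """Treat any answer that starts with 'yes' (case-insensitive) as True."""
    return answer.strip().lower().startswith("yes")


def enrich(record: dict, image_bytes: bytes, ask: Callable[[bytes, str], str]) -> dict:
    """Ask every prompt about the image and return the record with the new fields."""
    answers = {field: ask(image_bytes, prompt) for field, prompt in PROMPTS.items()}
    return {
        **record,
        "caption": answers["caption"].strip(),
        "extinguishersCount": parse_count(answers["extinguishersCount"]),
        "looksSafe": parse_yes_no(answers["looksSafe"]),
    }
```

Adding a new generated field then means adding one prompt and one line of parsing. Note that a model may answer a counting question in words ("two"), which this simple parser would turn into None.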

Just reply or reach out here with any questions, happy to help with these kinds of AppSheet use-cases that can bring in advanced cloud features like Vertex Generative AI image models into AppSheet apps.

- Labels: Intelligence


This approach is a game changer for companies trying to surface real-time services and core business data to AppSheet.

Thanks for publishing the `code` and how-to 🙌


Agreed, it opens up so many possibilities, and with Cloud Run the technical code part is really minimal and extremely reusable for all kinds of apps.


Thanks for sharing this. I agree with your comments about the reusability. I have another AppSheet use case in mind. Can a GLB or GLTF 3D file be viewed in the application? Something similar to Google 3D Modeler Viewer?


Hi, I don't know. If the objects can be rendered in a normal web page, then it should be possible to render them in the app (I have seen SVG and other web objects rendered).




When are we getting Firestore in AppSheet?
