- Digital Increment Bug

Hello everyone,

I have an app working perfectly for a year now (thank you so much for the amazing product).

But for a week now, I have had a bug with one of the columns, which appeared out of the blue.

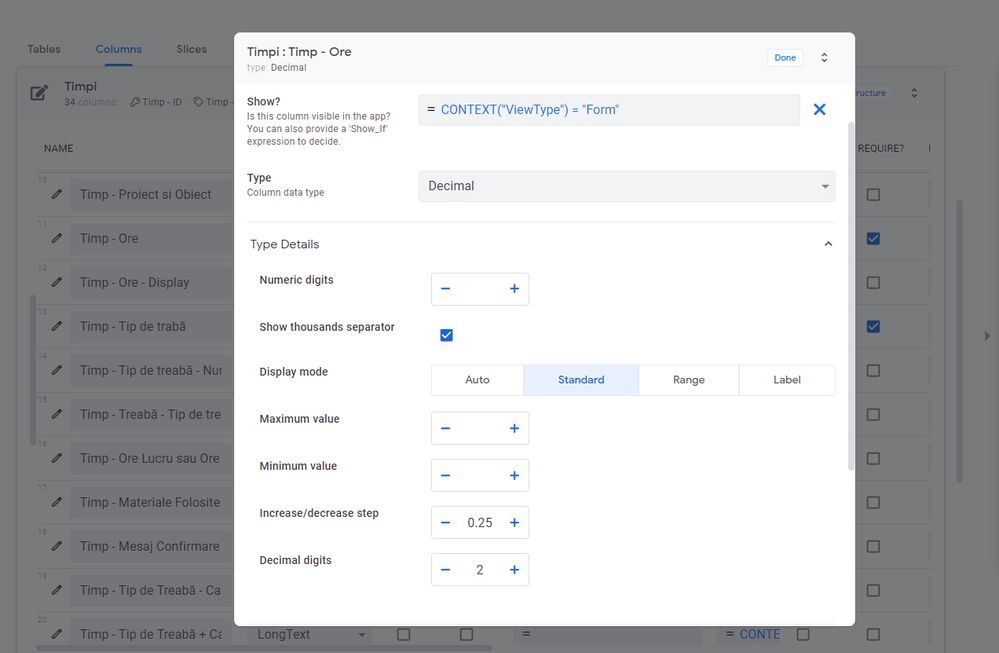

The app is for time tracking, and when a user fills in the form for the time, he puts in the value for how many hours he spent on a task. That is done with a Decimal column type, using an increase/decrease step of 0.25 and, therefore, two decimal digits.

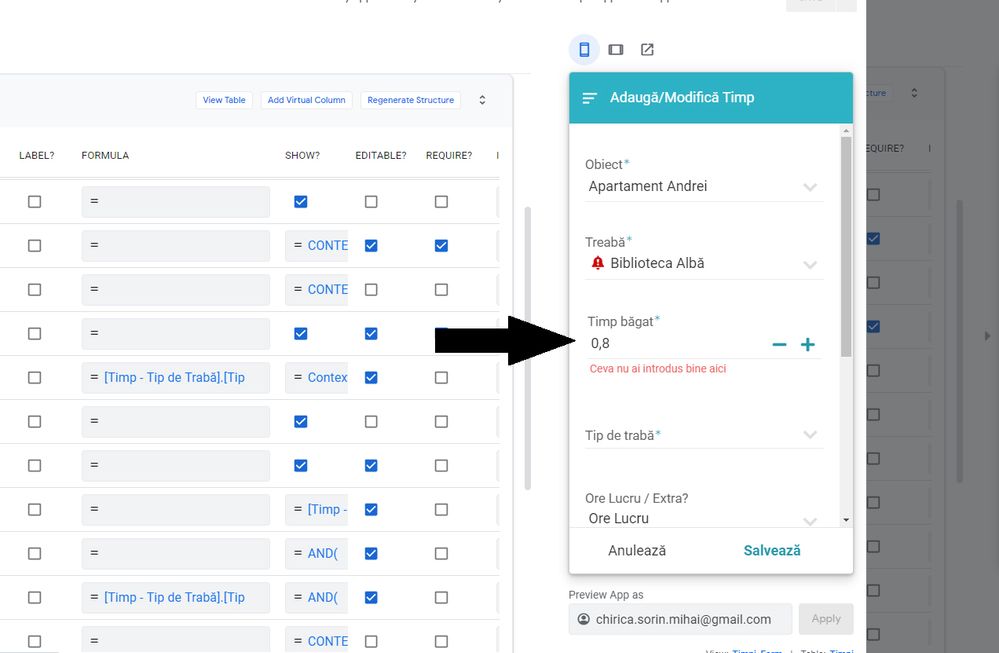

So when the user hits the + sign, he starts from 0 and goes up through

- 0.25 - 0.5 - 0.75 - 1 - 1.25 and so on.
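For reference, the expected stepping can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not AppSheet's implementation; the function name `step_values` and its parameters are made up for the example.

```python
from decimal import Decimal

def step_values(start, step, count, digits=2):
    """Return the values shown after pressing + `count` times from `start`.

    Hypothetical helper: uses exact decimal arithmetic and keeps
    `digits` decimal places, as a 0.25 step with two digits would need.
    """
    quantum = Decimal(10) ** -digits  # Decimal("0.01") when digits == 2
    value = Decimal(start)
    values = []
    for _ in range(count):
        value = (value + Decimal(step)).quantize(quantum)
        values.append(value)
    return values

# Pressing + five times from 0 with a step of 0.25
print([f"{v:.2f}" for v in step_values("0", "0.25", 5)])
```

With two decimal digits kept at every step, 0.75 and 1.75 are never rounded to a shorter value.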

And this worked perfectly until a week ago. Now, as you hit the + button, it goes through - 0.25 - 0.5 - 0.8 - 1.00 - 1 - 1.25 - 1.5 - 1.8 - 2.00 - 2 and so on.

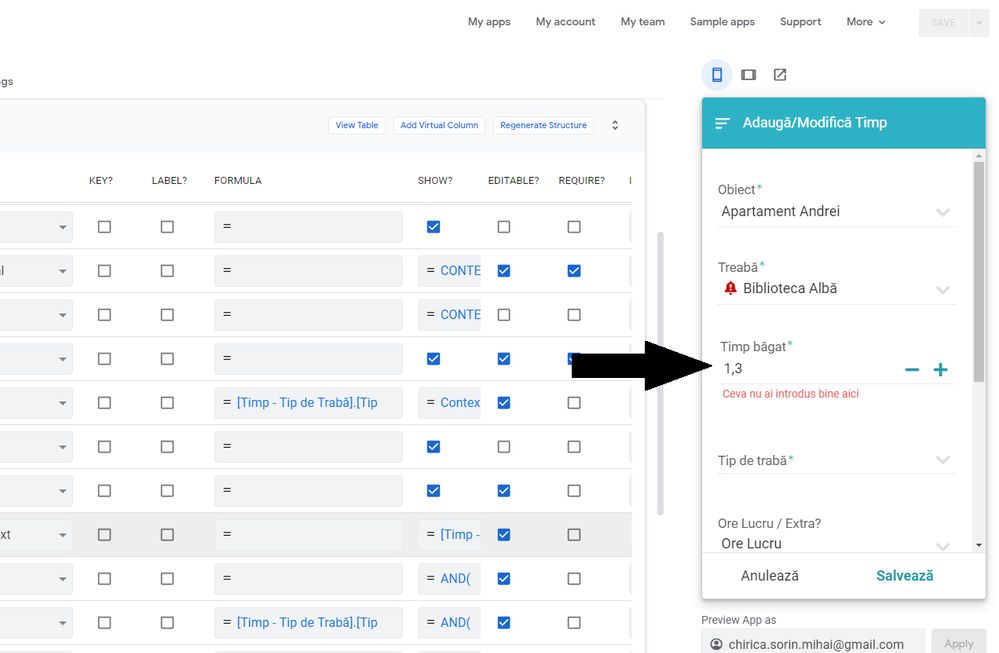

And also, when hitting the - button from there, it goes back through:

- 2.00 - 2 - 1.75 - 1.5 - 1.3 - 1.25 - 1.00 - 1 - 0.75 - 0.5 - 0.3 - 0.25 - 0.00 - 0
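One observation, offered as a guess rather than a confirmed cause: the stray values (0.8, 1.8, 1.3, 0.3) are what you get when 0.75, 1.75, 1.25 and 0.25 are rounded half-up to a single decimal digit. A small Python sketch of that arithmetic, with the hypothetical helper `round_one_digit`:

```python
from decimal import Decimal, ROUND_HALF_UP

def round_one_digit(value):
    """Round a decimal string half-up to one decimal digit (illustration only)."""
    return Decimal(value).quantize(Decimal("0.1"), rounding=ROUND_HALF_UP)

# The quarter-step values that show up wrong in the sequences above
for v in ("0.25", "0.75", "1.25", "1.75"):
    print(v, "->", round_one_digit(v))
```

This does not explain why the correct value also appears in some steps, so it is at best part of the picture.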

No idea why this is happening. I can’t think of what could have happened on my side, since I haven’t been working on it for a while now, and looking at the way it is set up, everything looks ok but in practice there is this bug.

Any clues?

Thank you

Clearly a bug. Please notify support@appsheet.com.

Support's response when I submitted this bug was that it was a parsing issue. Then I was asked where you set the increment value, so…

Gak!

same…just same…

I looked at the ticket. There has been some internal back-and-forth. It’s going to development as a bug. Lemme know if you don’t hear back in a reasonable amount of time.

If dev is gonna be digging around the increment field, maybe they should take a look at this while they are at it too. Decent number of votes, and it’s been there for a year and a half.

This was a regression related to a recent attempt to fix a localization issue. We have a fix pending (most likely will be released tomorrow) and increased test coverage to try to prevent this from recurring.

@Austin_Lambeth Agreed on the step size validation, this never made much sense to me either. I’ll take a closer look.

Had a ticket in about step size and validation, where either the step size or the decimal digits field would determine validity, and it would just pick the less precise of the two values. Never really had a finalized response on that from support, though.
